Technical Background

Delivering the dream

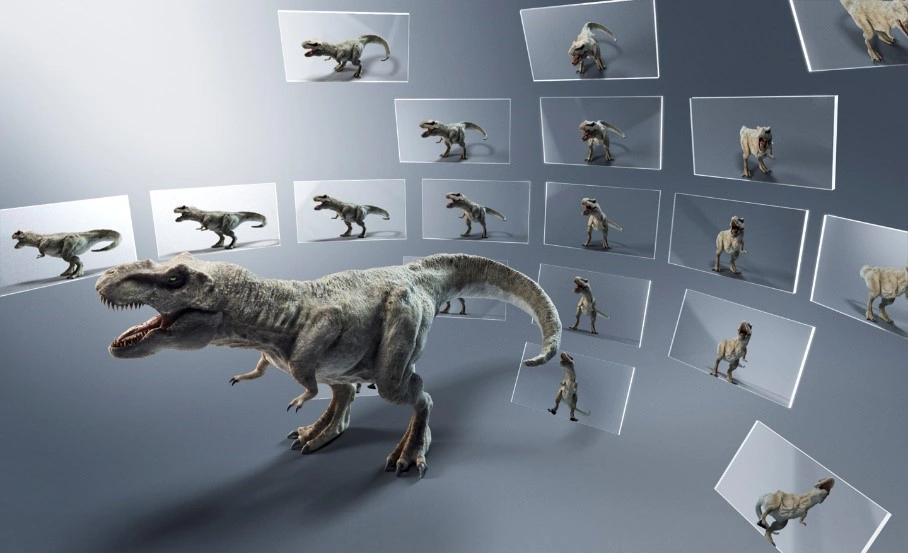

Science fiction movies like the original Star Wars depict volumetric displays showing 3D objects that appear to occupy real space, just as in life. You can examine the top, bottom and sides of displayed objects when you move your head, bringing them dramatically closer to the appearance of physical reality.

Of course, in this dream scenario, there are no 3D glasses.

In reality, such a display would be a game-changer for anyone who designs 3D objects: industrial designers, architects, engineers, game developers and computer graphics artists for motion pictures. Such a display would also constitute a breakthrough for detailed scientific visualization of 3D objects in biochemistry, geology, Geographic Information Systems and other disciplines.

Sony’s Spatial Reality Display is the realization of that dream.

Sony’s Spatial Reality Display works with a PC and a 3D computer graphics platform to deliver glasses-free stereoscopic 3D viewing and images that pivot immediately in response to head movement. The result is visual magic: an overwhelming impression of 3D reality. Even jaded design and computer graphics professionals are blown away.

How it works

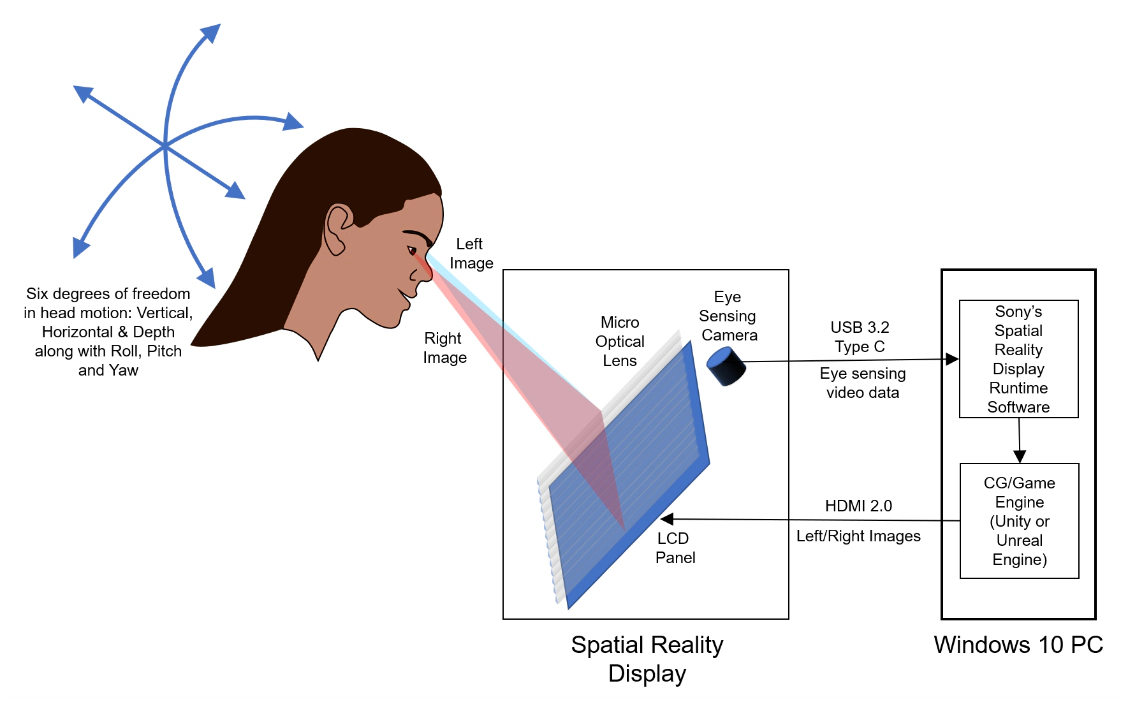

A breakthrough for viewing 3D computer graphics, the ELF-SR1 Spatial Reality Display consists of a 15.6” diagonal 4K LCD panel angled in the supplied stand at 45°. The panel, which has over 8 million pixels, displays independent left- and right-eye images, each with about half the pixels. A micro optical lens in front of the LCD panel separates the two images and directs each to the correct eye. This creates the powerful illusion of a 3D scene that extends in front of the screen surface. While impressive, the effect becomes even more compelling as you move your head. The system immediately pivots the 3D scene in response to movement, maintaining the illusion of real 3D objects. You’re free to look around, exploring the scene from different angles.

The secret is an eye sensing camera built into the bezel of the display. The camera outputs video data via USB 3.2 to a Windows® 10 PC. An advanced real-time rendering algorithm in Sony’s Spatial Reality Display Runtime Software interprets the eye sensing data and directs supported game engines to immediately pivot the scene according to your head motions.

The Spatial Reality Display is supported by two of the world’s top 3D content production platforms: Unity® and Unreal® Engine*1. In this way, the Spatial Reality Display is ready to work with a large library of existing virtual reality content.

*1 The use of "high resolution, quality images" created using Unity or Unreal Engine 4 software is recommended. A computer is required, with a recommended CPU of Intel Core i7-9700K @3.60 GHz or faster and a graphics card such as NVIDIA GeForce RTX 2070 SUPER or faster. Only Windows 10 (64-bit) is supported.
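To make the idea of pivoting the scene from eye-sensing data more concrete, here is a minimal Python sketch of off-axis (asymmetric) perspective projection, a standard computer graphics technique for head-tracked displays. It is not Sony's proprietary rendering algorithm, which this document does not describe. The function names, the coordinate frame (origin at the screen center, x to the right, y up, z toward the viewer, in centimeters), the 16:9 aspect ratio used to derive panel size from the 15.6" diagonal, and the 6.3 cm eye separation are all assumptions made for illustration.

```python
import math
from typing import NamedTuple, Tuple


class Frustum(NamedTuple):
    """View-frustum extents at the near plane, in the style of glFrustum."""
    left: float
    right: float
    bottom: float
    top: float
    near: float


def screen_size_cm(diagonal_in: float = 15.6,
                   aspect: Tuple[int, int] = (16, 9)) -> Tuple[float, float]:
    """Width and height (cm) of a panel with the given diagonal and aspect."""
    ax, ay = aspect
    scale = diagonal_in * 2.54 / math.hypot(ax, ay)
    return ax * scale, ay * scale


def off_axis_frustum(eye: Tuple[float, float, float],
                     width: float, height: float,
                     near: float = 1.0) -> Frustum:
    """Frustum for an eye at (x, y, z) looking through a screen centered at
    the origin. The screen edges are projected onto the near plane, so the
    frustum becomes asymmetric as soon as the eye leaves the central axis."""
    ex, ey, ez = eye
    if ez <= 0:
        raise ValueError("eye must be in front of the screen (z > 0)")
    k = near / ez  # similar-triangles scale from screen plane to near plane
    return Frustum(
        left=(-width / 2 - ex) * k,
        right=(width / 2 - ex) * k,
        bottom=(-height / 2 - ey) * k,
        top=(height / 2 - ey) * k,
        near=near,
    )


def stereo_frusta(head: Tuple[float, float, float],
                  width: float, height: float,
                  eye_separation_cm: float = 6.3,  # assumed typical value
                  near: float = 1.0) -> Tuple[Frustum, Frustum]:
    """Left-eye and right-eye frusta for a head position between the eyes."""
    hx, hy, hz = head
    half = eye_separation_cm / 2
    return (
        off_axis_frustum((hx - half, hy, hz), width, height, near),
        off_axis_frustum((hx + half, hy, hz), width, height, near),
    )


# Usage: a viewer 50 cm away, first centered, then shifted 10 cm to the right.
width, height = screen_size_cm()
centered = stereo_frusta((0.0, 0.0, 50.0), width, height)
shifted = stereo_frusta((10.0, 0.0, 50.0), width, height)
```

Recomputing the two frusta every frame from the latest sensed eye positions is what would make the rendered scene appear fixed in space while the viewer moves. A real system would also have to account for the tilt of the panel and for prediction of eye motion, which this sketch leaves out.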

Advantages

Unique in configuration and brimming with proprietary technology, Sony’s Spatial Reality Display empowers you to see what could never be seen before. For designers, researchers and content creation professionals, the benefits are profound.

- Glasses-free Stereoscopic 3D of exceptional quality. Some 3D displays are prone to Left/Right ghosting, also known as crosstalk, where each eye sees some of the image intended for the other. The micro optical lens is meticulously engineered to minimize crosstalk. The result is a convincing 3D effect that has won rave reviews from discerning professionals.

One secret to the system’s convincing 3D effect is the extraordinary precision of the micro optical lens that directs left-eye and right-eye images.

- Down-to-the-millisecond response to head motion with six degrees of freedom. The Spatial Reality Display sustains the powerful illusion of a solid volumetric scene by adjusting the view as you move your head. The system senses and smoothly responds to your head motion with six degrees of freedom. There are three axes of motion: up/down, left/right and forward/back up to the limits of the display’s viewing “cone.” Within limits, the display can also accommodate roll (tilting your head left and right), pitch (tilting your head up and down) and yaw (pivoting your head left and right). Time delay (latency) between your head motion and the resulting display adjustment would degrade the illusion. For maximum effect, the system operates in real time, with millisecond response and high accuracy. Sony accomplishes these goals with hardware, firmware and software advances including a high-speed camera, sophisticated face detection and eye sensing technology and a proprietary real-time rendering algorithm.

The high-speed camera (left) provides down-to-the-millisecond data for face tracking and eye sensing.

- High resolution. The Spatial Reality Display has an uncanny ability to convey object details and textures thanks to a 4K (3840 x 2160) native display panel featuring over 8 million pixels total. To optimize the 3D immersive experience, Sony applies a unique masked bezel edge. About half the pixels are delivered to each eye.

- Viewing comfort and convenience. Where VR headsets isolate the user from the computer keyboard and input devices, the Spatial Reality Display functions as a secondary desktop monitor. It works alongside traditional 2D monitors, empowering you to seamlessly transition between 2D and 3D evaluation. And while some users can experience nausea or discomfort with VR headsets, the Spatial Reality Display minimizes discomfort.

Content creation in Unity 2018.4.

- Accommodates existing workflows. The Spatial Reality Display will fit right in wherever professionals create 3D computer graphics. The system works with a Windows® 10 PC fitted with the CPU and GPU power typical of 3D computer graphics houses. Sony provides plug-in support for two of the world’s top 3D content production platforms: Unity and Unreal Engine. The ELF-SR1 can also display existing content already authored for Virtual Reality, either directly or with minor modification, depending on the content.
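The viewing “cone” mentioned in the list above can be made concrete with the viewing distance and viewing angle figures from the Specifications table (30 to 75 cm; up 20 degrees, down 40 degrees, left and right 25 degrees). The Python sketch below checks whether an eye position falls inside that range. The specification does not say which axis the angles are measured from, so the coordinate frame here (origin at the screen center, z along an assumed reference viewing direction, x to the right, y up, in centimeters) and the function name are assumptions for illustration only.

```python
import math

# Limits taken from the Specifications table.
DIST_MIN_CM, DIST_MAX_CM = 30.0, 75.0
MAX_UP_DEG, MAX_DOWN_DEG, MAX_SIDE_DEG = 20.0, 40.0, 25.0


def in_viewing_range(x: float, y: float, z: float) -> bool:
    """Return True if an eye at (x, y, z) cm lies inside the viewing range.

    Assumed frame: origin at the screen center, z along the reference
    viewing direction, x to the viewer's right, y up.
    """
    if z <= 0:
        return False  # behind or in the plane of the reference origin
    distance = math.sqrt(x * x + y * y + z * z)
    horizontal_deg = math.degrees(math.atan2(x, z))
    vertical_deg = math.degrees(math.atan2(y, z))
    return (
        DIST_MIN_CM <= distance <= DIST_MAX_CM
        and abs(horizontal_deg) <= MAX_SIDE_DEG
        and -MAX_DOWN_DEG <= vertical_deg <= MAX_UP_DEG
    )


# Usage: the range is taller below the reference axis than above it.
print(in_viewing_range(0.0, -30.0, 50.0))  # about 31 degrees below the axis
print(in_viewing_range(0.0, 30.0, 50.0))   # about 31 degrees above the axis
```

An application could use a check like this to decide when to prompt the user to move back into range, although how the actual runtime handles out-of-range viewers is not covered in this document.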

Applications

Since the first public demonstrations at CES 2020, the Spatial Reality Display has generated intense interest across many professions. Feedback from customer trials with evaluation samples has given us a preliminary glimpse at the potential markets.

- Automotive design. Car companies are recognizing the potential of this technology. “At Volkswagen, we've been evaluating Sony's Spatial Reality Display from its early stages, and we see considerable usefulness and multiple applications throughout the ideation and design process, and even with training," commented Frantisek Zapletal, Virtual Engineering Lab US, Volkswagen Group of America, Inc.

- Industrial design. Spatial Reality represents a completely new way for designers to preview their 3D creations. After working with a prototype, product designer So Morimoto said, “When I check my designs, I often change the angle of light to check the texture. I naturally tilt my head while I’m looking at things and what is amazing is that this display precisely reflects those natural movements.” Product designer and mechanical engineer Tatsuhito Aono said, “Even when working remotely like between Tokyo and Shanghai… we could share designs with this kind of presence.”

- Architecture. Architects are constantly striving to communicate 3D concepts. “This really feels like a step toward remotely communicating in shapes and being able to send ‘things’ to many different people,” said Keisuke Toyota, co-founder of noiz, an architecture practice in the Meguro section of Tokyo.

- Game development. Compatible with the Unity and Unreal Engine platforms that so many game developers are already using, the Spatial Reality Display is a natural fit for both conventional 2D and VR projects. You can readily evaluate 3D attributes without leaving your work environment.

- Entertainment/content creation. We sent a sample to The Mill, a leading production studio with offices in London, New York, Los Angeles, Chicago, Berlin and Bangalore. According to Dan Philips, executive producer of emerging technology, “You're literally looking at magic happen on the screen, wondering how it's working. Every single person I’ve seen observing this display is just like, ‘I’ve never seen anything like it.’” Sony Pictures Entertainment and Columbia Pictures subsidiary Ghost Corps worked on Ghostbusters: Afterlife. Says Eric Reich, brand and franchise executive at Ghost Corps, “The display offers a new approach to visualizing concepts and characters, making understanding the finished product that much easier.”

- Scientific visualization. Whether you’re trying to understand complex proteins, mineral deposits, or structural stress, there are applications where two dimensions just aren’t enough. The Spatial Reality Display enables powerful 3D visualization without the need for cumbersome VR headsets or the complexity of CAVE automatic virtual environments.

Why Sony?

In the words of company co-founder Akio Morita, “We do what others don’t.” The Spatial Reality Display is an expression of that ambition. Of course, the display also reflects Sony’s technological leadership in three fields.

- A leader in displays, from the production monitors on Hollywood soundstages and the award-winning critical evaluation monitors in color grading suites to retail and corporate digital signage and home televisions.

- A leader in image sensing. The system takes advantage of a proprietary high-speed vision sensor.

- A leader in computer-generated image processing, demonstrated in generation after generation of PlayStation® consoles, leading up to PlayStation 5.

The Spatial Reality Display represents a first-of-its-kind integration of all these world-class technologies. This is a compelling 3D experience you won’t find anywhere else.

Appendix 1: 3D depth perception and the Spatial Reality Display

As most professionals reading this document know, humans rely on depth perception every time we drive a car, reach for a pencil or hit a tennis ball. Since depth perception is so fundamental, it takes advantage of a broad range of visual cues. These include many cues evident in 2D monitors and projectors and even visible in Renaissance painting: occlusion, relative size and aerial perspective. 2D moving pictures provide another cue: camera motion parallax.

To appreciate the Spatial Reality Display, it pays to examine three additional cues that are crucial to depth perception and then see how different display technologies succeed or fail to employ them.

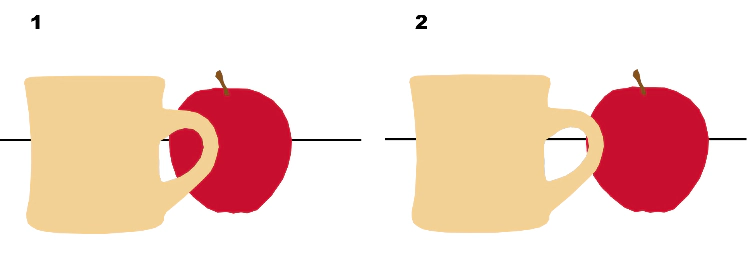

- Binocular disparity. Thanks to the spatial separation between eyes, the left eye image is slightly different from the right. This one cue is solely responsible for the difference between 2D and 3D cinema presentation. In this conceptual view of superimposed left and right images in 3D cinema, the spoon has disparity to appear in front of the screen, the mug has zero disparity to appear at the screen and the apple has negative disparity to appear behind the screen.

- Head motion occlusion. In real life, your motion changes the way foreground objects obstruct background objects. Scene 2 simulates moving your head to the right, revealing more of the apple.

- Head motion parallax. It’s not just occlusion. Scene 2 simulates moving your viewpoint up and to the right. This shifts the entire perspective and reveals the top of the mug.
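The binocular disparity cue can be put into numbers with similar triangles. The Python sketch below computes the on-screen separation between the left-eye and right-eye images of a point on the central viewing axis. The sign convention is chosen to match the description above (positive in front of the screen, zero at the screen, negative behind it); many references use the opposite sign. The function name, the 50 cm viewing distance and the 6.3 cm eye separation are assumptions for illustration, not figures from Sony.

```python
def screen_disparity_cm(point_distance_cm: float,
                        screen_distance_cm: float = 50.0,
                        eye_separation_cm: float = 6.3) -> float:
    """On-screen offset between left-eye and right-eye images of a point.

    Distances are measured from the viewer along the central axis.
    Result > 0: the point appears in front of the screen.
    Result = 0: the point appears at the screen surface.
    Result < 0: the point appears behind the screen.
    """
    if point_distance_cm <= 0:
        raise ValueError("point must be in front of the viewer")
    return (eye_separation_cm
            * (screen_distance_cm - point_distance_cm)
            / point_distance_cm)


# Usage: three objects at 40, 50 and 60 cm from a viewer 50 cm from the screen.
for name, distance in (("spoon", 40.0), ("mug", 50.0), ("apple", 60.0)):
    print(name, round(screen_disparity_cm(distance), 3))
```

With these assumed values, the spoon at 40 cm gets a positive disparity, the mug at the screen distance gets zero, and the apple at 60 cm gets a negative disparity, matching the three cases described for the conceptual view.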

When we compare how different display types employ these depth cues, it’s clear that Sony’s Spatial Reality Display addresses the same cues as Virtual Reality headsets and CAVE automatic virtual environments.

3D display types

| Visual cue | Stereoscopic 3D monitors & projectors | Commercially available multi-view 3D monitors | VR headsets | CAVE automatic virtual environments | Sony’s Spatial Reality Display |
|---|---|---|---|---|---|
| Binocular disparity | Yes | Yes | Yes | Yes | Yes |
| Horizontal head motion occlusion | - | Yes | Yes | Yes | Yes |
| Vertical head motion occlusion | - | - | Yes | Yes | Yes |
| Forward/backward head motion occlusion | - | - | Yes | Yes | Yes |
| Horizontal head motion parallax | - | Yes | Yes | Yes | Yes |
| Vertical head motion parallax | - | - | Yes | Yes | Yes |
| Forward/backward head motion parallax | - | - | Yes | Yes | Yes |

Appendix 2: Sony’s Spatial Reality versus other volumetric 3D displays

VR headsets

VR headsets have gained traction for gaming and selected consumer and professional applications. For all their advantages, they place the user into an isolated environment divorced from the working world. You need to take off the headset to accomplish everything from most design tasks to answering emails and picking up the phone. The Spatial Reality Display acts like a second computer monitor, enabling you to instantly switch from 3D evaluation to all the other tasks that make up your day.

In addition, some users report a degree of discomfort while using VR. The Spatial Reality Display minimizes these issues.

CAVE automatic virtual environments

CAVE automatic virtual environments continue to be the gold standard in 3D viewing. We’re proud that leading CAVE installations have selected Sony’s SXRD® 3D video projectors. However, CAVE viewing is not for everyone. You need a dedicated room – a substantial investment in real estate – in addition to the cost of multiple projectors, displays and CGI computers. CAVE also requires meticulous engineering, precise installation, careful calibration and 3D glasses. While CAVE is ideal for well-endowed research facilities, it’s out of reach for everyone else.

In addition, CAVE, like VR headsets, is not a good fit with the everyday work environment. While Spatial Reality literally fits on a desk, CAVE viewing takes researchers away from their desks altogether.

Multi-view 3D monitors

At first glance, Sony’s Spatial Reality Display appears to serve the same function as commercially available multi-view 3D monitors. A deeper dive reveals important differences and suggests substantially different applications and possibilities. While Sony’s Spatial Reality Display serves one user at a time, multi-view 3D monitors can serve several users simultaneously. Naturally, this capability involves significant tradeoffs.

- Step-wise rendering. According to Sony’s survey of commercial products as of February 2021, multi-view displays typically offer 45 views. In comparison, the PC in Sony’s Spatial Reality system can render literally hundreds of views, each precisely calculated for the user’s exact position. With just 45 views, the image changes in discrete steps as you move your head. Step-wise rendering can be moderated by allowing each eye of the user to see two or more adjacent views, but that entails considerable blurring on parts of the image that extend in front and behind the display’s “zero parallax plane.” Step-wise rendering can also be moderated by intentional blurring during content creation. But once again, this sacrifices image detail.

- Limited resolution. Using the example of 45 views, each user typically sees 2/45 of the display’s native resolution, one view per eye. This means roughly 95% of the display pixels (43 of every 45 views) are devoted to views you’re not seeing. The engineers of multi-view 3D displays thus face a challenging tradeoff. On one hand, they can maximize resolution. On the other, they can provide lots of views. They can’t do both.

- No response to up/down or forward/back head motion. According to Sony’s survey of published claims, you cannot change your view of the object by moving vertically, toward the display or away. The view changes in response to side-to-side head movement only.
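The resolution tradeoff in the list above comes down to simple arithmetic, sketched here in Python. The 45-view figure and the 3,840 x 2,160 panel resolution come from this document. The assumption that each viewer sees exactly two views (one per eye), the even split of pixels across views, and the variable names are simplifications for illustration. The Spatial Reality Display figure ignores the pixels masked near the bezel.

```python
PANEL_PIXELS = 3840 * 2160  # 4K panel: 8,294,400 pixels


def visible_fraction(total_views: int, views_seen: int = 2) -> float:
    """Fraction of panel pixels that reach one viewer (one view per eye)."""
    if total_views < views_seen:
        raise ValueError("cannot see more views than the display provides")
    return views_seen / total_views


# Multi-view display with 45 views: one viewer sees 2 of them.
multi_view_seen = visible_fraction(45)
multi_view_unused = 1 - multi_view_seen

# Spatial Reality Display: about half of the panel pixels go to each eye.
srd_pixels_per_eye = PANEL_PIXELS // 2

print(f"multi-view: {multi_view_seen:.1%} seen, {multi_view_unused:.1%} unused")
print(f"Spatial Reality Display: about {srd_pixels_per_eye:,} pixels per eye")
```

The calculation gives about 4.4% of pixels seen and about 95.6% unused for the 45-view case, in line with the figure quoted above, against roughly 4.1 million pixels per eye when the panel is split between two views.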

These differences suggest that the Spatial Reality Display and multi-view 3D monitors offer substantially different performance.

Specifications

| DIMENSIONS AND WEIGHT | |
|---|---|
| DIMENSIONS | Approx. 15-1/8 x 9-1/4 x 9-1/8" (383 x 232 x 231 mm); 15-1/8 x 9-1/4 x 9-3/4" (383 x 232 x 247 mm) including accessories |
| WEIGHT WITH ACCESSORIES | Approx. 10.8 lbs. (4.9 kg) |
| WEIGHT WITHOUT ACCESSORIES | Approx. 10.2 lbs. (4.6 kg) |
| SENSOR | |
| HIGH-SPEED VISION SENSOR | Eye-sensing vision sensor built in |
| VIEWING DISTANCE | 12–30 inches (30–75 cm) |
| VIEWING ANGLE | Up 20 degrees, down 40 degrees, left and right 25 degrees; landscape use only |
| NUMBER OF USERS AT A TIME | One*2 |
| DISPLAY | |
| SIZE (MEASURED DIAGONALLY) | 15.6 inches |
| LUMINANCE | 500 nits |
| CONTRAST | 1400:1 |
| GAMUT | Adobe RGB approx. 100% |
| COLOR TEMPERATURE | 6500K |
| PANEL RESOLUTION | 3,840 x 2,160 pixels*3 |
| AUDIO | |
| SPEAKERS | 5.5 W (1.5 W + 1.5 W + 2.5 W) |
| CONNECTIVITY | |
| HDMI INPUT | 1 |
| CONTROL TERMINAL | 1 (USB 3.2 Gen1 Type-C) |
| POWER | DC IN, 12V 2.0A |
| POWER AND ENERGY SAVING | |
| POWER CONSUMPTION (ON) | 24 W |
| POWER CONSUMPTION (STANDBY) | 0.5 W |
| ENVIRONMENT | |
| ILLUMINANCE | 100 to 1,000 lux, with a minimum of 100 lux on the user’s face to support the eye sensing camera |
| TEMPERATURE | 32–104 °F (0–40 °C) |
| HUMIDITY | 20–80 % (No condensation) |
| SUPPORTED GAME ENGINES | |
| Unity | 2019.4 (recommended), 2018.4, 2020.3 |
| Unreal Engine 4 | 4.25 (recommended), 4.24, 4.26 |
| LANGUAGE | |
| DISPLAY LANGUAGE | English/French |
| PC SOFTWARE | English/French |
| ACCESSIBILITY | |
| TEXT TO SPEECH | Yes |
| WHAT'S IN THE BOX | AC adapter (1), power cord (1), USB Type-C cable (1), HDMI cable (1), warranty card (1), instruction manual (1), side panels (2) (left and right), top flange (1), bottom stand (1), cleaning cloth (1) |
| PC SYSTEM REQUIREMENTS | |
| OPERATING SYSTEM | Windows® 10 (64-bit) |
| PROCESSOR | Intel® Core i7-9700K @3.60GHz or faster (8 cores or more) (Benchmark: Geekbench score >7000) |
| GPU | NVIDIA® GeForce® RTX 2070 SUPER or faster (Benchmark: 3DMark score (Fire Strike) > 25,000) |
| RAM | 16.0GB or more |
| STORAGE | Content-dependent |

*2 Eye sensing may not always work as intended depending on viewing conditions.

*3 Pixels close to the bezel are masked for a better 3D experience. A little pattern of lines may appear depending on conditions and content, due to the display structure.